5 Exploring quantitative data

This chapter focuses on exploring quantitative data using summary statistics and visualizations. The summaries and graphs presented in this chapter are created using statistical software; however, since this might be your first exposure to the concepts, we take our time in this chapter to detail how to create them. Mastery of the content presented in this chapter will be crucial for understanding the methods and techniques introduced in the rest of the book.

Consider the loan_amount variable from the loan50 data set, which represents the loan size for all 50 loans in the data set.

This variable is quantitative (or numerical) since we can sensibly discuss the numerical difference of the size of two loans.

On the other hand, area codes and zip codes are not quantitative, but rather they are categorical variables.

Throughout this chapter, we will apply these methods using the loan50, county, and email50 data sets, which were introduced in Section 1.2.

If you’d like to review the variables from any of these data sets, see Tables 1.4 and 1.6.

The loan50 and email50 data sets can be found in the openintro package.

The county data can be found in the usdata package.

5.1 Scatterplots for paired data

A scatterplot provides a case-by-case view of data for two quantitative variables.

In Figure 1.4, a scatterplot was used to examine the homeownership rate against the fraction of housing units that were part of multi-unit properties (e.g. apartments) in the county data set.

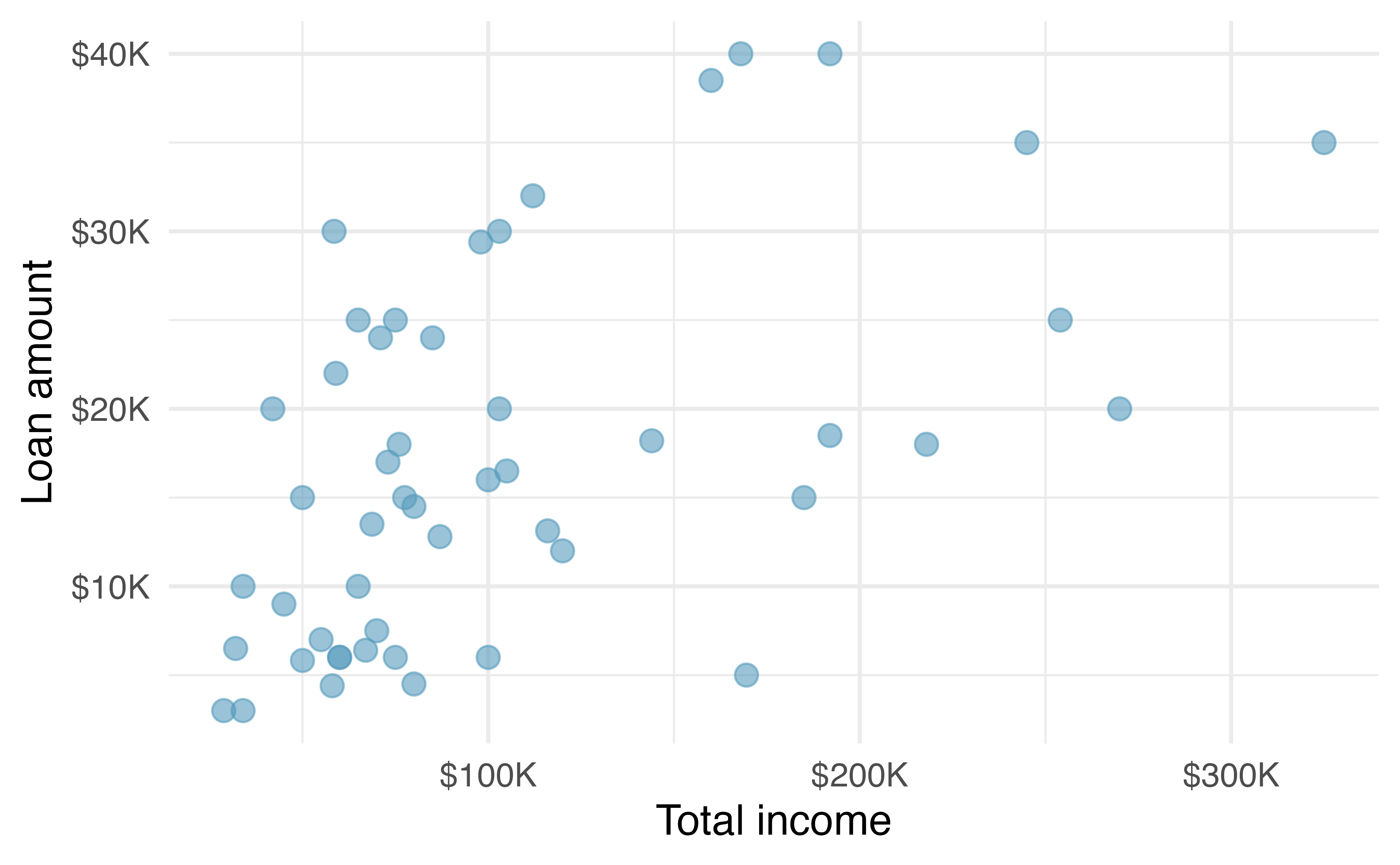

Another scatterplot is shown in Figure 5.1, comparing the total income of a borrower total_income and the amount they borrowed loan_amount for the loan50 data set.

In any scatterplot, each point represents a single case.

Since there are 50 cases in loan50, there are 50 points in Figure 5.1.

When examining scatterplots, we describe four features:

- Form - If you were to trace the trend of the points, would the trend be linear or nonlinear?

- Direction - As values on the x-axis increase, do the y-values tend to increase (positive direction) or do they decrease (negative direction)?

- Strength - How closely do the points follow a trend?

- Unusual observations or outliers - Are there any unusual observations that do not seem to match the overall pattern of the scatterplot?

Figure 5.1: A scatterplot of loan_amount versus total_income for the loan50 data set.

Looking at Figure 5.1, we see that there are many borrowers with income below $100,000 on the left side of the graph, while there are a handful of borrowers with income above $250,000. The loan amounts vary from below $10,000 to around $40,000. The data seem to have a linear form, though the relationship between the two variables is quite weak. The direction is positive—as total income increases, the loan amount also tends to increase—and there may be a few unusual observations in the higher income range, though since the relationship is weak, it is hard to tell.

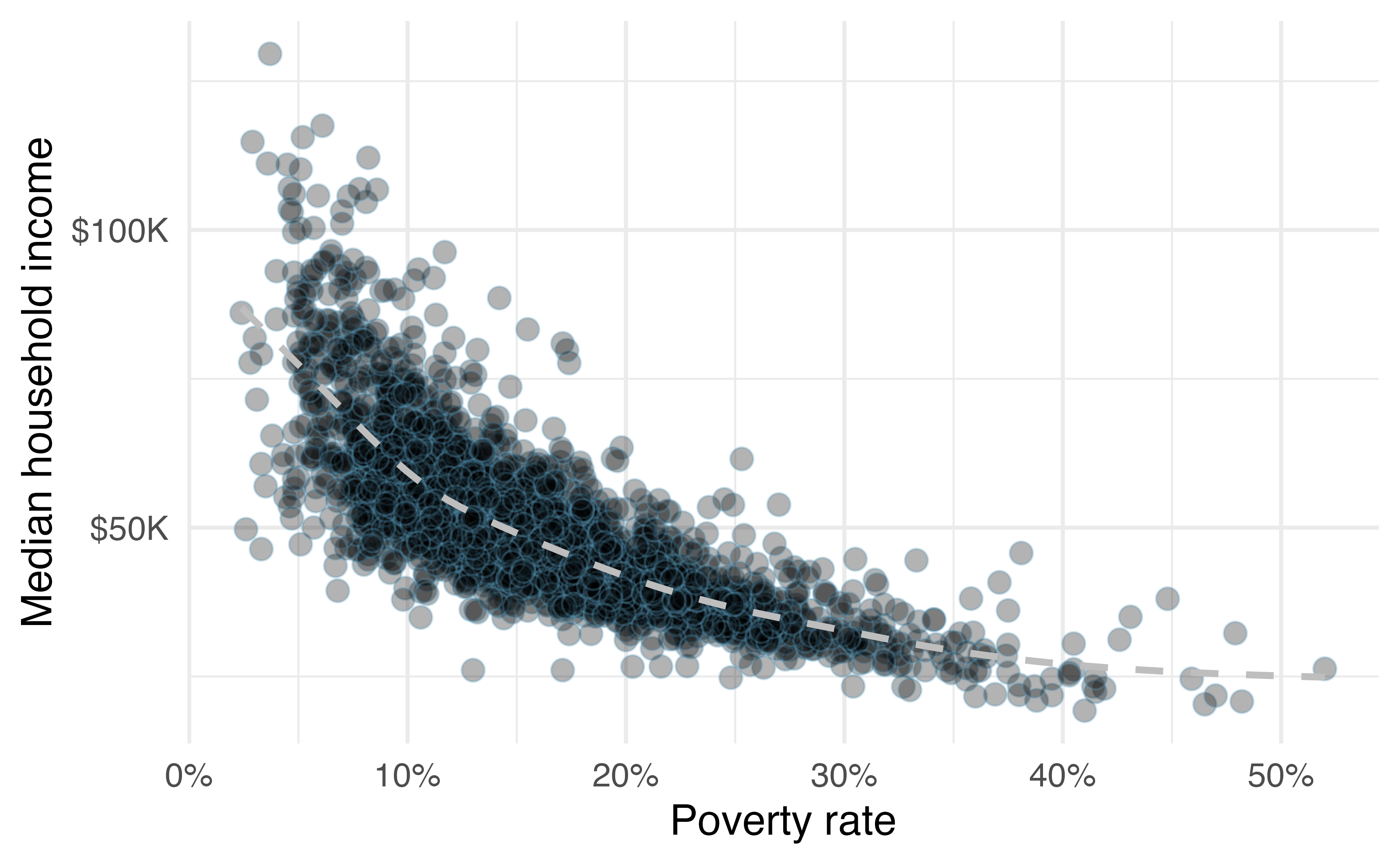

Figure 5.2: A scatterplot of the median household income against the poverty rate for the county data set. Data are from 2017. A statistical model has also been fit to the data and is shown as a dashed line.

Figure 5.2 shows a plot of median household income against the poverty rate for 3,142 counties. What can be said about the relationship between these variables?

The relationship is evidently nonlinear, as highlighted by the dashed line. This is different from previous scatterplots we have seen, which show relationships with little, if any, curvature in the trend. The relationship is moderate to strong, the direction is negative, and there do not appear to be any unusual observations.

What do scatterplots reveal about the data, and how are they useful?

Describe two variables that would have a horseshoe-shaped association in a scatterplot (\(\cap\) or \(\frown\)).

5.2 Dot plots and the mean

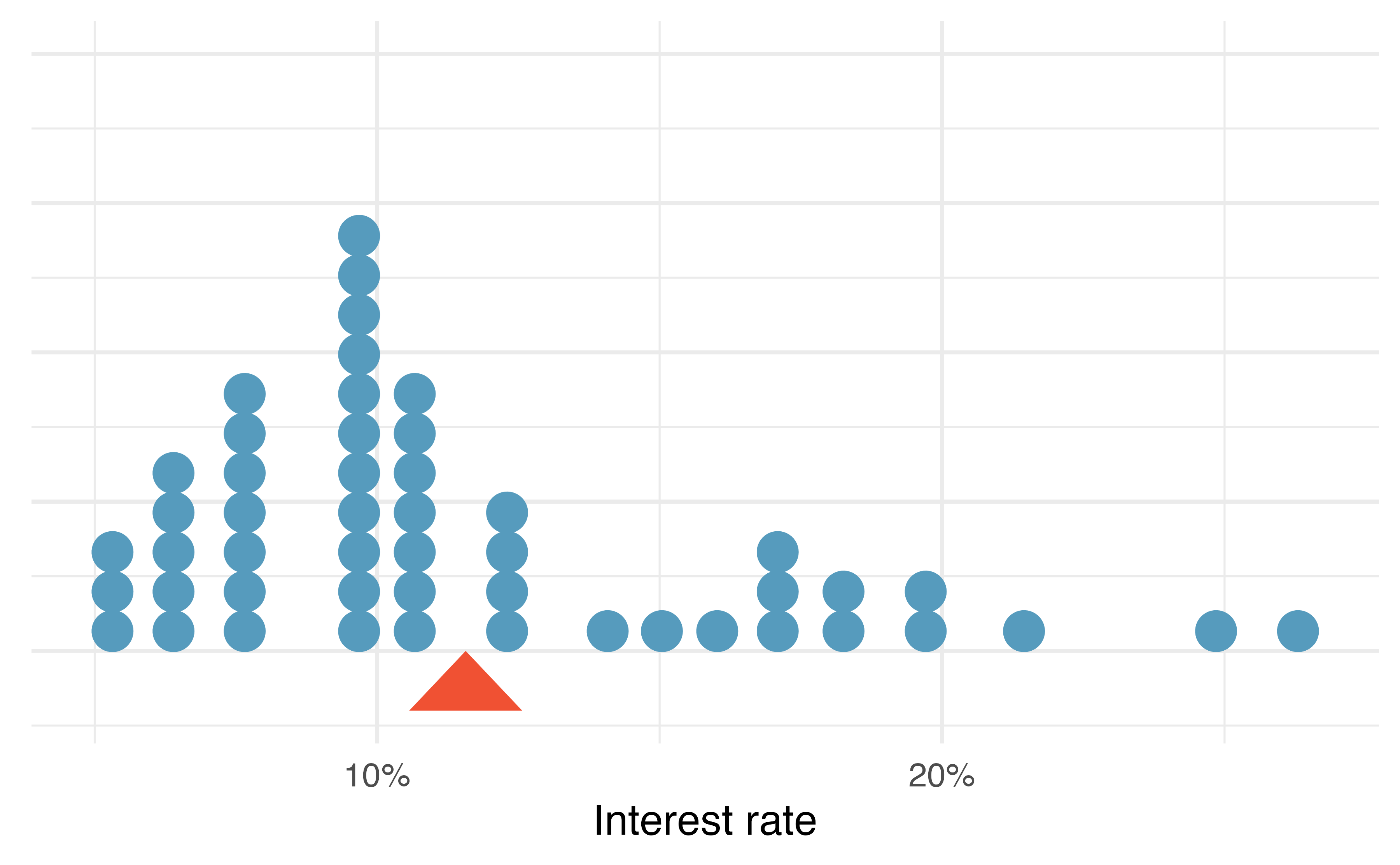

Sometimes we are interested in the distribution of a single variable. In these cases, a dot plot provides the most basic of displays. A dot plot is a one-variable scatterplot; an example using the interest rate of 50 loans is shown in Figure 5.3.

Figure 5.3: A dot plot of interest_rate for the loan50 data set. The rates have been rounded to the nearest percent in this plot, and the distribution’s mean is shown as a red triangle.

The distribution of a variable is a description of the possible values it takes and how frequently each value occurs. The mean, often called the average, is a common way to measure the center of a distribution of data. To compute the mean interest rate of the 50 loans above, we add up all the interest rates and divide by the number of observations.

The sample mean is often labeled \(\bar{x}\). The letter \(x\) is being used as a generic placeholder for the variable and the bar over the \(x\) communicates we’re looking at the average of that variable. In our example \(x\) would represent interest rate, and \(\bar{x}\) = 11.57%. It is useful to think of the mean as the balancing point of the distribution, and it’s shown as a triangle in Figure 5.3.

Mean.

The sample mean can be calculated as the sum of the observed values divided by the number of observations:

\[ \bar{x} = \frac{x_1 + x_2 + \cdots + x_n}{n} \]

Examine the equation for the mean. What does \(x_1\) correspond to? And \(x_2\)? Can you infer a general meaning for what \(x_i\) might represent?

What was \(n\) in this sample of loans?

The loan50 data set represents a sample from a larger population of loans made through Lending Club.

We could compute a mean for this population in the same way as the sample mean.

However, the population mean has a special label: \(\mu\).

The symbol \(\mu\) is the Greek letter mu and represents the average of all observations in the population.

Sometimes a subscript, such as \(_x\), is used to represent which variable the population mean refers to, e.g., \(\mu_x\).

It is often too expensive or time consuming to measure the population mean precisely, so we estimate \(\mu\) using the sample mean, \(\bar{x}\).


The average interest rate across all loans in the population can be estimated using the sample data. Based on the sample of 50 loans, what would be a reasonable estimate of \(\mu_x\), the mean interest rate for all loans in the full data set?

The sample mean, 11.57%, provides a rough estimate of \(\mu_x\). While it is not perfect, this statistic is our single best guess, or point estimate, of the average interest rate of all the loans in the population under study (the parameter). In Chapter 9 and beyond, we will develop tools to characterize the accuracy of point estimates, like the sample mean. As you might have guessed, point estimates based on larger samples tend to be more accurate than those based on smaller samples.
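The sample mean quoted above can be reproduced directly from its definition. The following is a minimal sketch in Python, assuming the 50 interest rates are typed in by hand from the sorted listing in Table 5.2; the variable names (`rates`, `x_bar`) are illustrative and not part of any package.

```python
# The 50 interest rates (in percent), copied from Table 5.2.
rates = [
    5.31, 5.31, 5.32, 6.08, 6.08, 6.08, 6.71, 6.71, 7.34, 7.35,
    7.35, 7.96, 7.96, 7.96, 7.97, 9.43, 9.43, 9.44, 9.44, 9.44,
    9.92, 9.92, 9.92, 9.92, 9.93, 9.93, 10.42, 10.42, 10.90, 10.90,
    10.91, 10.91, 10.91, 11.98, 12.62, 12.62, 12.62, 14.08, 15.04, 16.02,
    17.09, 17.09, 17.09, 18.06, 18.45, 19.42, 20.00, 21.45, 24.85, 26.30,
]

n = len(rates)            # number of observations in the sample
x_bar = sum(rates) / n    # sample mean: (x_1 + x_2 + ... + x_n) / n

print(f"n = {n}, sample mean = {x_bar:.2f}%")
```

The same value of \(\bar{x}\) then serves as the point estimate of \(\mu_x\).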

The mean is useful for making comparisons across different samples that may have different sample sizes because it allows us to rescale or standardize a metric into something more easily interpretable and comparable.

Suppose we would like to understand if a new drug is more effective at treating asthma attacks than the standard drug. A trial of 1500 adults is set up, where 500 receive the new drug, and 1000 receive a standard drug in the control group:

| | New drug | Standard drug |
|---|---|---|
| Number of patients | 500 | 1000 |
| Total asthma attacks | 200 | 300 |

Comparing the raw counts of 200 to 300 asthma attacks would make it appear that the new drug is better, but this is an artifact of the imbalanced group sizes. Instead, we should look at the average number of asthma attacks per patient in each group:

- New drug: \(200 / 500 = 0.4\) asthma attacks per patient

- Standard drug: \(300 / 1000 = 0.3\) asthma attacks per patient

The standard drug has a lower average number of asthma attacks per patient than the average in the treatment group.

Emilio opened a food truck last year where he sells burritos, and his business has stabilized over the last 4 months. Over that 4 month period, he has made $11,000 while working 625 hours. Emilio’s competition, Francis, has made $13,000 over the last 4 months while working 800 hours. Francis brags to Emilio that her business is more profitable. Is Francis’ claim warranted?

Emilio’s average hourly earnings provide a useful statistic for evaluating how much his venture is, at least from a financial perspective, worth:

\[ \frac{\$11000}{625\text{ hours}} = \$17.60\text{ per hour} \]

By knowing his average hourly wage, Emilio now has put his earnings into a standard unit that is easier to compare with many other jobs that he might consider.

In comparison, Francis’ average hourly wage was

\[ \frac{\$13000}{800\text{ hours}} = \$16.25\text{ per hour} \]

Thus, while Francis’ total earnings were larger than Emilio’s, when standardizing by hour, Francis shouldn’t brag.

Suppose we want to compute the average income per person in the US. To do so, we might first think to take the mean of the per capita incomes across the 3,142 counties in the data set. What would be a better approach?

The county data set is special in that each county actually represents many individual people.

If we were to simply average across the income variable, we would be treating counties with 5,000 and 5,000,000 residents equally in the calculations.

Instead, we should compute the total income for each county, add up all the counties’ totals, and then divide by the number of people in all the counties.

If we completed these steps with the data, we would find that the per capita income for the US is $30,861.

Had we computed the simple mean of per capita income across counties, the result would have been just $26,093!

This example used what is called a weighted mean. For more information on this topic, check out the following online supplement regarding weighted means.
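To make the difference between a simple mean and a weighted mean concrete, here is a small sketch in Python. The three counties below are hypothetical (made-up populations and per capita incomes, not values from the county data set); only the calculation mirrors the steps described above.

```python
# Hypothetical counties: (population, per capita income in dollars).
# These numbers are invented for illustration only.
counties = [
    (5_000, 22_000),
    (250_000, 28_000),
    (5_000_000, 35_000),
]

# Simple mean: every county counts equally, regardless of its size.
simple_mean = sum(income for _, income in counties) / len(counties)

# Weighted mean: total income over all counties divided by total population.
total_income = sum(pop * income for pop, income in counties)
total_population = sum(pop for pop, _ in counties)
weighted_mean = total_income / total_population

print(f"simple mean of county values: ${simple_mean:,.0f}")
print(f"population-weighted mean:     ${weighted_mean:,.0f}")
```

Because the largest hypothetical county also has the highest income, the weighted mean sits well above the simple mean, which is the same direction of difference reported for the real county data.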

5.3 Histograms and shape

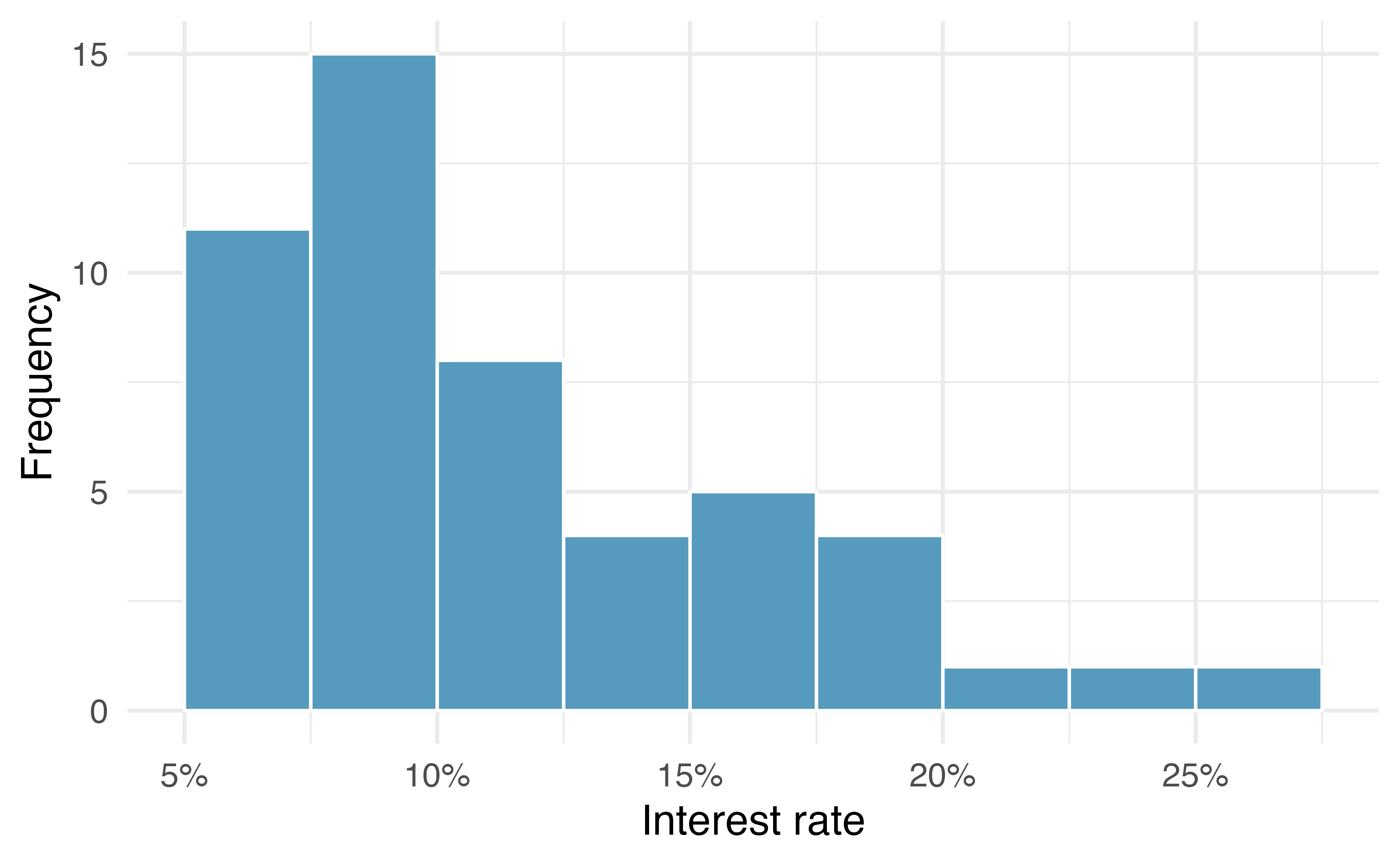

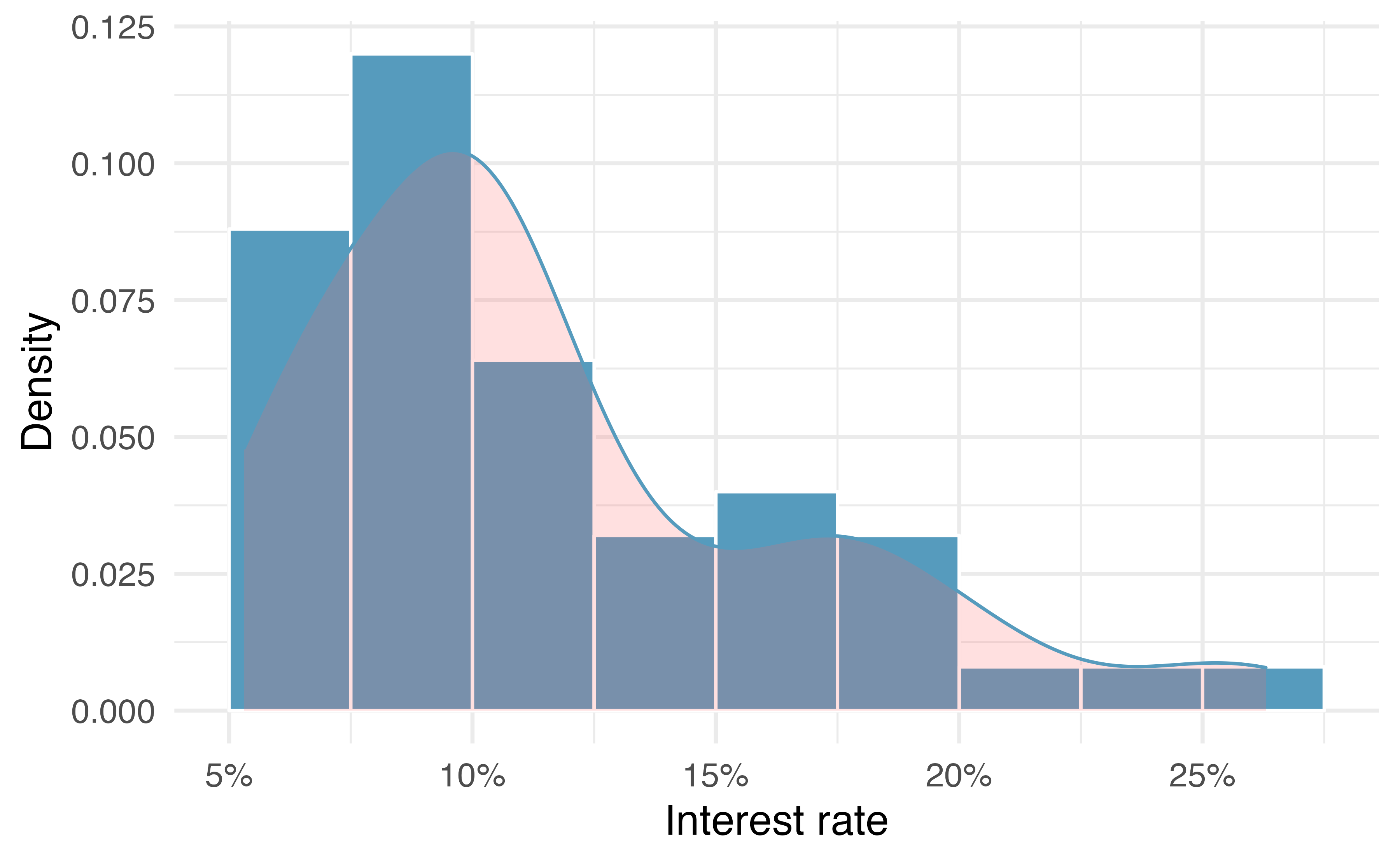

Dot plots show the exact value for each observation. This is useful for small data sets, but they can become hard to read with larger samples. Rather than showing the value of each observation, we prefer to think of the value as belonging to a bin. For example, in the loan50 data set, we created a table of counts for the number of loans with interest rates between 5.0% and 7.5%, then the number of loans with rates between 7.5% and 10.0%, and so on. Observations that fall on the boundary of a bin (e.g., 10.00%) are allocated to the lower bin. This tabulation is shown in Table 5.1. These binned counts are plotted as bars in Figure 5.4 into what is called a histogram, which resembles a more heavily binned version of the stacked dot plot shown in Figure 5.3.

| Interest rate | 5% - 7.5% | 7.5% - 10% | 10% - 12.5% | 12.5% - 15% | 15% - 17.5% | 17.5% - 20% | 20% - 22.5% | 22.5% - 25% | 25% - 27.5% |
|---|---|---|---|---|---|---|---|---|---|
| n | 11 | 15 | 8 | 4 | 5 | 4 | 1 | 1 | 1 |
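The binning behind this table can be sketched in a few lines of Python. This is an illustration rather than the code used to build the table: it assumes the rates are entered by hand from Table 5.2 and uses right-closed bins, so a value that sits exactly on a boundary goes to the lower bin, as described above.

```python
import math

# The 50 interest rates (in percent), copied from Table 5.2.
rates = [
    5.31, 5.31, 5.32, 6.08, 6.08, 6.08, 6.71, 6.71, 7.34, 7.35,
    7.35, 7.96, 7.96, 7.96, 7.97, 9.43, 9.43, 9.44, 9.44, 9.44,
    9.92, 9.92, 9.92, 9.92, 9.93, 9.93, 10.42, 10.42, 10.90, 10.90,
    10.91, 10.91, 10.91, 11.98, 12.62, 12.62, 12.62, 14.08, 15.04, 16.02,
    17.09, 17.09, 17.09, 18.06, 18.45, 19.42, 20.00, 21.45, 24.85, 26.30,
]

bin_start, bin_width, n_bins = 5.0, 2.5, 9

counts = [0] * n_bins
for x in rates:
    # Right-closed bins (lower, upper]: a boundary value such as 20.00
    # lands in the lower bin. max(..., 0) keeps a value equal to
    # bin_start in the first bin.
    idx = max(math.ceil((x - bin_start) / bin_width) - 1, 0)
    counts[idx] += 1

for i, c in enumerate(counts):
    lower = bin_start + i * bin_width
    print(f"{lower:4.1f}% - {lower + bin_width:4.1f}%: {c}")
```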

Figure 5.4: A histogram of interest_rate. This distribution is strongly skewed to the right.

Histograms provide a view of the data density. Higher bars represent where the data are relatively more common, or “dense.” For instance, there are many more loans with rates between 5% and 10% than loans with rates between 20% and 25% in the data set. The bars make it easy to see how the density of the data changes relative to the interest rate.

Histograms are especially convenient for understanding the shape of the data distribution. Figure 5.4 suggests that most loans have rates under 15%, while only a handful of loans have rates above 20%. When data trail off to the right in this way and have a longer right tail, the shape is said to be right skewed.

Data sets with the reverse characteristic—a long, thinner tail to the left—are said to be left skewed. We also say that such a distribution has a long left tail. Data sets that show roughly equal trailing off in both directions are called symmetric.

When data trail off in one direction, the distribution has a long tail. If a distribution has a long left tail, it is left skewed or negatively skewed. If a distribution has a long right tail, it is right skewed or positively skewed.

Besides the mean (since it was labeled), what can you see in the dot plot in Figure 5.3 that you cannot see in the histogram in Figure 5.4?

In addition to looking at whether a distribution is skewed or symmetric, histograms can be used to identify modes. A mode is represented by a prominent peak in the distribution. There is only one prominent peak in the histogram of interest_rate.

A definition of mode sometimes taught in math classes is the value with the most occurrences in the data set. However, for many real-world data sets, it is common to have no observations with the same value in a data set, making this definition impractical in data analysis.

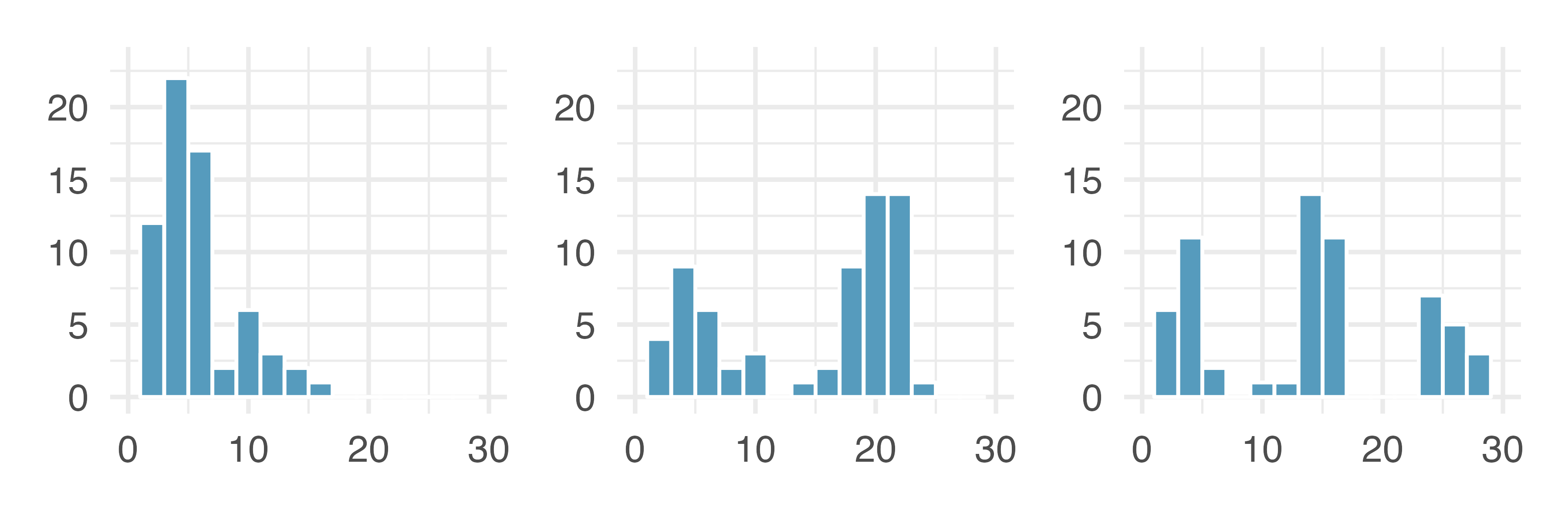

Figure 5.5 shows histograms that have one, two, or three prominent peaks. Such distributions are called unimodal, bimodal, and multimodal, respectively. Any distribution with more than two prominent peaks is called multimodal. Notice that there was one prominent peak in the unimodal distribution with a second less prominent peak that was not counted since it only differs from its neighboring bins by a few observations.

Figure 5.5: Counting only prominent peaks, the distributions are (left to right) unimodal, bimodal, and multimodal. Note that the left plot is unimodal because we are counting prominent peaks, not just any peak.

Figure 5.4 reveals only one prominent mode in the interest rate. Is the distribution unimodal, bimodal, or multimodal?

Height measurements of young students and adult teachers at a K-3 elementary school were taken. How many modes would you expect in this height data set?

Looking for modes isn’t about finding a clear and correct answer about the number of modes in a distribution, which is why prominent is not rigorously defined in this book. The most important part of this examination is to better understand your data.

Another type of plot that is helpful for exploring the shape of a distribution is a smoothed histogram, called a density plot. A density plot will scale the \(y\)-axis so that the total area under the density curve is equal to one. This allows us to get a sense of what proportion of the data lie in a certain interval, rather than the frequency of data in the interval. We can change the scale of a histogram to plot proportions rather than frequencies, then overlay a density curve on this rescaled histogram, as seen in Figure 5.6.

Figure 5.6: A density plot of interest_rate overlaid on a histogram using density scale.
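The rescaling from counts to a density scale can be worked through with the binned counts from Table 5.1. The sketch below, in Python, converts each count to a proportion and then divides by the bin width, so that the bar areas (height times width) add up to one. It does not compute the smooth density curve itself, which requires a smoothing method not covered here.

```python
counts = [11, 15, 8, 4, 5, 4, 1, 1, 1]   # binned counts from Table 5.1
bin_width = 2.5                           # each bin spans 2.5 percentage points
n = sum(counts)

# Proportion of loans in each bin.
proportions = [c / n for c in counts]

# Bar heights on the density scale: proportion per unit of interest rate.
densities = [p / bin_width for p in proportions]

# The total area of the bars is the sum of height * width over all bins.
total_area = sum(d * bin_width for d in densities)

print("proportions:", [round(p, 2) for p in proportions])
print("densities:  ", [round(d, 3) for d in densities])
print("total area: ", round(total_area, 6))
```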

5.4 Variance and standard deviation

The mean was introduced as a method to describe the center of a data set, and variability in the data is also important. Here, we introduce two measures of variability: the variance and the standard deviation. Both of these are very useful in data analysis, even though their formulas are a bit tedious to calculate by hand. The standard deviation is the easier of the two to comprehend, and it roughly describes how far away the typical observation is from the mean.

We call the distance of an observation from its mean its deviation. Below are the deviations for the \(1^{st}\), \(2^{nd}\), \(3^{rd}\), and \(50^{th}\) observations in the interest_rate variable:

\[\begin{align*} x_1 - \bar{x} &= 10.9 - 11.57 = -0.67 \\ x_2 - \bar{x} &= 9.92 - 11.57 = -1.65 \\ x_3 - \bar{x} &= 26.3 - 11.57 = 14.73 \\ &\;\;\vdots \\ x_{50} - \bar{x} &= 6.08 - 11.57 = -5.49 \end{align*}\]

If we square these deviations and then take an average, the result is equal to the sample variance, denoted by \(s^2\):

\[\begin{align*} s^2 &= \frac{(-0.67)^2 + (-1.65)^2 + (14.73)^2 + \cdots + (-5.49)^2}{50 - 1} \\ &= \frac{0.45 + 2.72 + 216.97 + \cdots + 30.14}{49} \\ &= 25.52 \end{align*}\]

We divide by \(n - 1\), rather than dividing by \(n\), when computing a sample’s variance; there’s some mathematical nuance here, but the end result is that doing this makes this statistic slightly more reliable and useful.

Notice that squaring the deviations does two things. First, it makes large values relatively much larger. Second, it gets rid of any negative signs.

The standard deviation is defined as the square root of the variance:

\[ s = \sqrt{25.52} = 5.05 \]

While often omitted, a subscript of \(_x\) may be added to the variance and standard deviation, i.e., \(s_x^2\) and \(s_x\), if it is useful as a reminder that these are the variance and standard deviation of the observations represented by \(x_1\), \(x_2\), …, \(x_n\).

Variance and standard deviation.

The sample variance is the (near) average squared distance from the mean: \[ s^2 = \frac{(x_1 - \bar{x})^2 + (x_2 - \bar{x})^2 + \cdots + (x_n - \bar{x})^2}{n-1} \] The sample standard deviation is the square root of the variance: \(s = \sqrt{s^2}\).
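The formulas in this box translate almost line for line into code. The following Python sketch assumes the rates are typed in from Table 5.2 and computes the deviations, the sample variance (dividing by \(n - 1\)), and the standard deviation. The final line compares against `statistics.variance` from the Python standard library, which also divides by \(n - 1\).

```python
import math
import statistics

# The 50 interest rates (in percent), copied from Table 5.2.
rates = [
    5.31, 5.31, 5.32, 6.08, 6.08, 6.08, 6.71, 6.71, 7.34, 7.35,
    7.35, 7.96, 7.96, 7.96, 7.97, 9.43, 9.43, 9.44, 9.44, 9.44,
    9.92, 9.92, 9.92, 9.92, 9.93, 9.93, 10.42, 10.42, 10.90, 10.90,
    10.91, 10.91, 10.91, 11.98, 12.62, 12.62, 12.62, 14.08, 15.04, 16.02,
    17.09, 17.09, 17.09, 18.06, 18.45, 19.42, 20.00, 21.45, 24.85, 26.30,
]

n = len(rates)
x_bar = sum(rates) / n

# Deviation of each observation from the sample mean.
deviations = [x - x_bar for x in rates]

# Sample variance: sum of squared deviations divided by n - 1.
s2 = sum(d ** 2 for d in deviations) / (n - 1)

# Sample standard deviation: square root of the variance.
s = math.sqrt(s2)

print(f"variance = {s2:.2f}, standard deviation = {s:.2f}")
print("matches statistics.variance:", math.isclose(s2, statistics.variance(rates)))
```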

The standard deviation is useful when considering how far the data are distributed from the mean. The standard deviation represents the typical deviation of observations from the mean. If the distribution is bell-shaped, about 70% of the data will be within one standard deviation of the mean and about 95% will be within two standard deviations. However, these percentages do not necessarily hold for other shaped distributions!

Like the mean, the population values for variance and standard deviation have special symbols: \(\sigma^2\) for the variance and \(\sigma\) for the standard deviation.

The Greek letter \(\sigma\) is pronounced sigma.

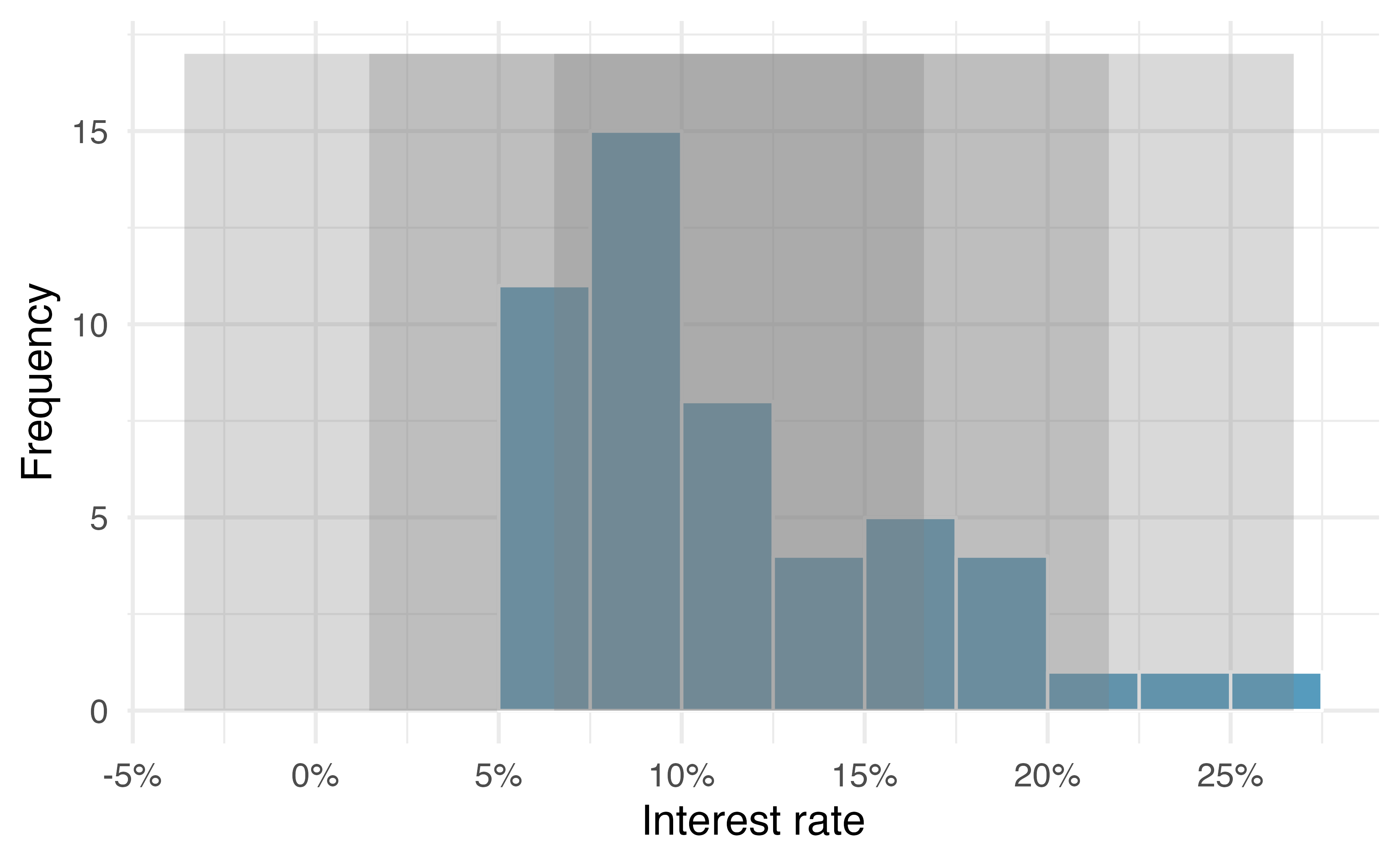

Figure 5.7: For the interest_rate variable, 34 of the 50 loans (68%) had interest rates within 1 standard deviation of the mean, and 48 of the 50 loans (96%) had rates within 2 standard deviations. Usually about 70% of the data are within 1 standard deviation of the mean and 95% within 2 standard deviations, though this is far from a hard rule.
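The counts in this caption can be checked with a short Python sketch. It assumes the rates are entered from Table 5.2 and uses a hypothetical helper, `count_within`, that counts the observations lying within \(k\) standard deviations of the mean.

```python
import statistics

# The 50 interest rates (in percent), copied from Table 5.2.
rates = [
    5.31, 5.31, 5.32, 6.08, 6.08, 6.08, 6.71, 6.71, 7.34, 7.35,
    7.35, 7.96, 7.96, 7.96, 7.97, 9.43, 9.43, 9.44, 9.44, 9.44,
    9.92, 9.92, 9.92, 9.92, 9.93, 9.93, 10.42, 10.42, 10.90, 10.90,
    10.91, 10.91, 10.91, 11.98, 12.62, 12.62, 12.62, 14.08, 15.04, 16.02,
    17.09, 17.09, 17.09, 18.06, 18.45, 19.42, 20.00, 21.45, 24.85, 26.30,
]

x_bar = statistics.mean(rates)
s = statistics.stdev(rates)   # sample standard deviation (divides by n - 1)


def count_within(k):
    """Count observations within k standard deviations of the mean."""
    return sum(1 for x in rates if abs(x - x_bar) <= k * s)


within_1 = count_within(1)
within_2 = count_within(2)

print(f"within 1 SD: {within_1} of {len(rates)} ({within_1 / len(rates):.0%})")
print(f"within 2 SD: {within_2} of {len(rates)} ({within_2 / len(rates):.0%})")
```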

Figure 5.8: Three very different population distributions with the same mean (0) and standard deviation (1).

A good description of the shape of a distribution should include modality and whether the distribution is symmetric or skewed to one side. Using Figure 5.8 as an example, explain why such a description is important.

Describe the distribution of the interest_rate variable using the histogram in Figure 5.4.

The description should incorporate the center, variability, and shape of the distribution, and it should also be placed in context.

Also note any especially unusual cases.

The distribution of interest rates is unimodal and skewed to the high end. Many of the rates fall near the mean at 11.57%, and most fall within one standard deviation (5.05%) of the mean. There are a few exceptionally large interest rates in the sample that are above 20%.

In practice, the variance and standard deviation are sometimes used as a means to an end, where the “end” is being able to accurately estimate the uncertainty associated with a sample statistic. For example, in Chapter 17 the standard deviation is used in calculations that help us understand how much a sample mean varies from one sample to the next.

5.5 Box plots, quartiles, and the median

A box plot (or box-and-whisker plot) summarizes a data set using five statistics while also identifying unusual observations. The five statistics (minimum, first quartile, median, third quartile, maximum) together are called the five number summary.

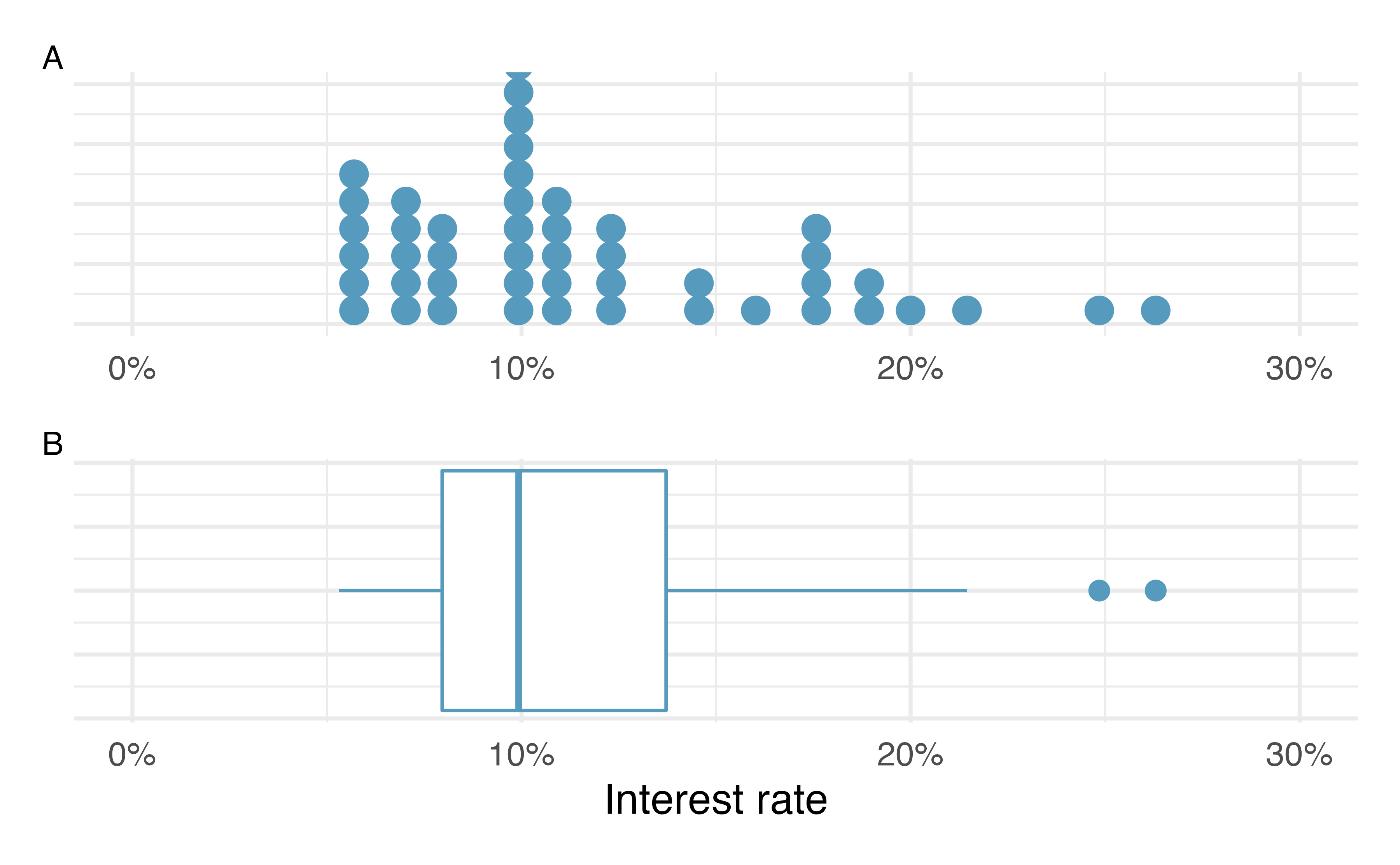

Figure 5.9 provides a dot plot alongside a box plot of the interest_rate variable from the loan50 data set.

Figure 5.9: Plot A shows a dot plot and Plot B shows a box plot of the distribution of interest rates from the loan50 dataset.

The dark line inside the box represents the median, which splits the data in half: 50% of the data fall below this value and 50% fall above it. Since in the loan50 dataset there are 50 observations (an even number), the median is defined as the average of the two observations closest to the \(50^{th}\) percentile. Table 5.2 shows all interest rates, arranged in ascending order. We can see that the \(25^{th}\) and the \(26^{th}\) values are both 9.93, which corresponds to the dark line in the box plot in Figure 5.9.

| | 1 | 2 | 3 | 4 | 5 | 6 | 7 | 8 | 9 | 10 |
|---|---|---|---|---|---|---|---|---|---|---|
| 1 | 5.31 | 5.31 | 5.32 | 6.08 | 6.08 | 6.08 | 6.71 | 6.71 | 7.34 | 7.35 |
| 10 | 7.35 | 7.96 | 7.96 | 7.96 | 7.97 | 9.43 | 9.43 | 9.44 | 9.44 | 9.44 |
| 20 | 9.92 | 9.92 | 9.92 | 9.92 | 9.93 | 9.93 | 10.42 | 10.42 | 10.90 | 10.90 |
| 30 | 10.91 | 10.91 | 10.91 | 11.98 | 12.62 | 12.62 | 12.62 | 14.08 | 15.04 | 16.02 |
| 40 | 17.09 | 17.09 | 17.09 | 18.06 | 18.45 | 19.42 | 20.00 | 21.45 | 24.85 | 26.30 |

When there are an odd number of observations, there will be exactly one observation that splits the data into two halves, and in such a case that observation is the median (no average needed).

Median: the number in the middle.

If the data are ordered from smallest to largest, the median is the observation right in the middle. If there are an even number of observations, there will be two values in the middle, and the median is taken as their average.

Mathematically, if we denote the sample size by \(n\), then

- if \(n\) is odd, the median is the \([(n+1)/2]^{th}\) smallest value in the data set, and

- if \(n\) is even, the median is the average of the \((n/2)^{th}\) and \((n/2+1)^{th}\) smallest values in the data set.
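The two cases above can be written as a short function. This Python sketch is one straightforward way to code the rule; the function name `median` is illustrative, and the rates are assumed to be entered from Table 5.2.

```python
def median(values):
    """Median following the odd/even rule described above."""
    ordered = sorted(values)
    n = len(ordered)
    if n % 2 == 1:
        # Odd n: the ((n + 1) / 2)-th smallest value (lists are 0-indexed).
        return ordered[(n + 1) // 2 - 1]
    # Even n: average of the (n / 2)-th and (n / 2 + 1)-th smallest values.
    return (ordered[n // 2 - 1] + ordered[n // 2]) / 2


# The 50 interest rates (in percent), copied from Table 5.2.
rates = [
    5.31, 5.31, 5.32, 6.08, 6.08, 6.08, 6.71, 6.71, 7.34, 7.35,
    7.35, 7.96, 7.96, 7.96, 7.97, 9.43, 9.43, 9.44, 9.44, 9.44,
    9.92, 9.92, 9.92, 9.92, 9.93, 9.93, 10.42, 10.42, 10.90, 10.90,
    10.91, 10.91, 10.91, 11.98, 12.62, 12.62, 12.62, 14.08, 15.04, 16.02,
    17.09, 17.09, 17.09, 18.06, 18.45, 19.42, 20.00, 21.45, 24.85, 26.30,
]

print("median interest rate:", median(rates))   # average of the 25th and 26th values
print("median of [3, 1, 2]:", median([3, 1, 2]))  # odd n: the middle value
```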

The median is an example of a percentile. Since 50% of the data fall below the median, the median is the \(50^{th}\) percentile.

Percentiles.

The \(p^{th}\) percentile is a value such that \(p\)% of the data fall below that value. For example, 7.96 is the \(25^{th}\) percentile of the interest rates shown in Table 5.2 since 25% of the data fall below 7.96 (and 75% fall above).

The second step in building a box plot is drawing a rectangle to represent the middle 50% of the data. The length of the box is called the interquartile range, or IQR for short. It, like the standard deviation, is a measure of variability in data. The more variable the data, the larger the standard deviation and IQR tend to be. The two boundaries of the box are called the first quartile (the \(25^{th}\) percentile) and the third quartile (the \(75^{th}\) percentile), and these are often labeled \(Q_1\) and \(Q_3\), respectively.

Interquartile range (IQR).

The interquartile range (IQR) is the length of the box in a box plot. It is computed as \[ IQR = Q_3 - Q_1, \] where \(Q_1\) and \(Q_3\) are the \(25^{th}\) and \(75^{th}\) percentiles, respectively.
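As a rough illustration, the quartiles and IQR of the interest rates can be computed with Python's standard library. Software packages use several slightly different conventions for percentiles. The sketch below assumes the `"inclusive"` method of `statistics.quantiles`, which interpolates linearly between neighboring sorted values; other conventions may give slightly different quartiles.

```python
import statistics

# The 50 interest rates (in percent), copied from Table 5.2.
rates = [
    5.31, 5.31, 5.32, 6.08, 6.08, 6.08, 6.71, 6.71, 7.34, 7.35,
    7.35, 7.96, 7.96, 7.96, 7.97, 9.43, 9.43, 9.44, 9.44, 9.44,
    9.92, 9.92, 9.92, 9.92, 9.93, 9.93, 10.42, 10.42, 10.90, 10.90,
    10.91, 10.91, 10.91, 11.98, 12.62, 12.62, 12.62, 14.08, 15.04, 16.02,
    17.09, 17.09, 17.09, 18.06, 18.45, 19.42, 20.00, 21.45, 24.85, 26.30,
]

# n=4 asks for the three cut points that split the data into quarters.
# method="inclusive" is an assumed convention, not the only valid one.
q1, q2, q3 = statistics.quantiles(rates, n=4, method="inclusive")
iqr = q3 - q1

print(f"Q1 = {q1:.3f}, median = {q2:.3f}, Q3 = {q3:.3f}, IQR = {iqr:.3f}")
```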

What percent of the data fall between \(Q_1\) and the median? What percent is between the median and \(Q_3\)?

Extending out from the box, the whiskers attempt to capture the data outside of the box. The whiskers of a box plot reach to the minimum and the maximum values in the data, unless there are points that are considered unusually high or unusually low, which are identified as potential outliers by the box plot. These are labeled with a dot on the box plot. The purpose of labeling these points, instead of extending the whiskers to the minimum and maximum observed values, is to help identify any observations that appear to be unusually distant from the rest of the data. There are a variety of formulas for determining whether a particular data point is considered an outlier, and different statistical software packages use different formulas. A commonly used formula is that any value that is beyond \(1.5\times IQR\) away from the box is considered an outlier. These outlier cutoff values are sometimes called the fences. In a sense, the box is like the body of the box plot and the whiskers are like its arms trying to reach the rest of the data, up to the outliers.

In Figure 5.9, the upper whisker does not extend to the last two points, 24.85% and 26.3%, which are above \(Q_3 + 1.5\times IQR\), and so it extends only to the last point below this limit. The lower whisker stops at the minimum value in the data set, 5.31%, since there are no outliers on the lower end of the distribution.

Box plots only display observed data values. The whiskers extend to actual data points, not the limits for outliers. That is, the fence values \(Q_1 - 1.5\times IQR\) and \(Q_3 + 1.5\times IQR\) should not be shown on the plot.
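The \(1.5\times IQR\) rule and the resulting whisker endpoints can be sketched in Python as follows. As before, the rates are assumed to be entered from Table 5.2, and the quartiles use the `"inclusive"` convention of `statistics.quantiles`, which is an assumption; a different quartile convention would shift the fences slightly.

```python
import statistics

# The 50 interest rates (in percent), copied from Table 5.2.
rates = [
    5.31, 5.31, 5.32, 6.08, 6.08, 6.08, 6.71, 6.71, 7.34, 7.35,
    7.35, 7.96, 7.96, 7.96, 7.97, 9.43, 9.43, 9.44, 9.44, 9.44,
    9.92, 9.92, 9.92, 9.92, 9.93, 9.93, 10.42, 10.42, 10.90, 10.90,
    10.91, 10.91, 10.91, 11.98, 12.62, 12.62, 12.62, 14.08, 15.04, 16.02,
    17.09, 17.09, 17.09, 18.06, 18.45, 19.42, 20.00, 21.45, 24.85, 26.30,
]

q1, _, q3 = statistics.quantiles(rates, n=4, method="inclusive")
iqr = q3 - q1

# Fences: cutoffs beyond which a point is flagged as a potential outlier.
lower_fence = q1 - 1.5 * iqr
upper_fence = q3 + 1.5 * iqr

outliers = [x for x in rates if x < lower_fence or x > upper_fence]
inside = [x for x in rates if lower_fence <= x <= upper_fence]

# Whiskers end at the most extreme observed values inside the fences,
# not at the fences themselves.
lower_whisker, upper_whisker = min(inside), max(inside)

print("potential outliers:", outliers)
print("whiskers reach from", lower_whisker, "to", upper_whisker)
```

With these data the two flagged points are the same two loans that the upper whisker in Figure 5.9 does not reach.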

Outliers are extreme.

An outlier is an observation that appears extreme relative to the rest of the data. Examining data for outliers serves many useful purposes, including

- identifying strong skew in the distribution,

- identifying possible data collection or data entry errors, and

- providing insight into interesting properties of the data.

5.6 Describing and comparing quantitative distributions

As a review, when describing a scatterplot (the association between two quantitative variables), we look for four features:

- Form

- Direction

- Strength

- Outliers

When asked to describe or compare univariate (single variable) quantitative distributions, we look for four features:

- Center

- Variability

- Shape

- Outliers

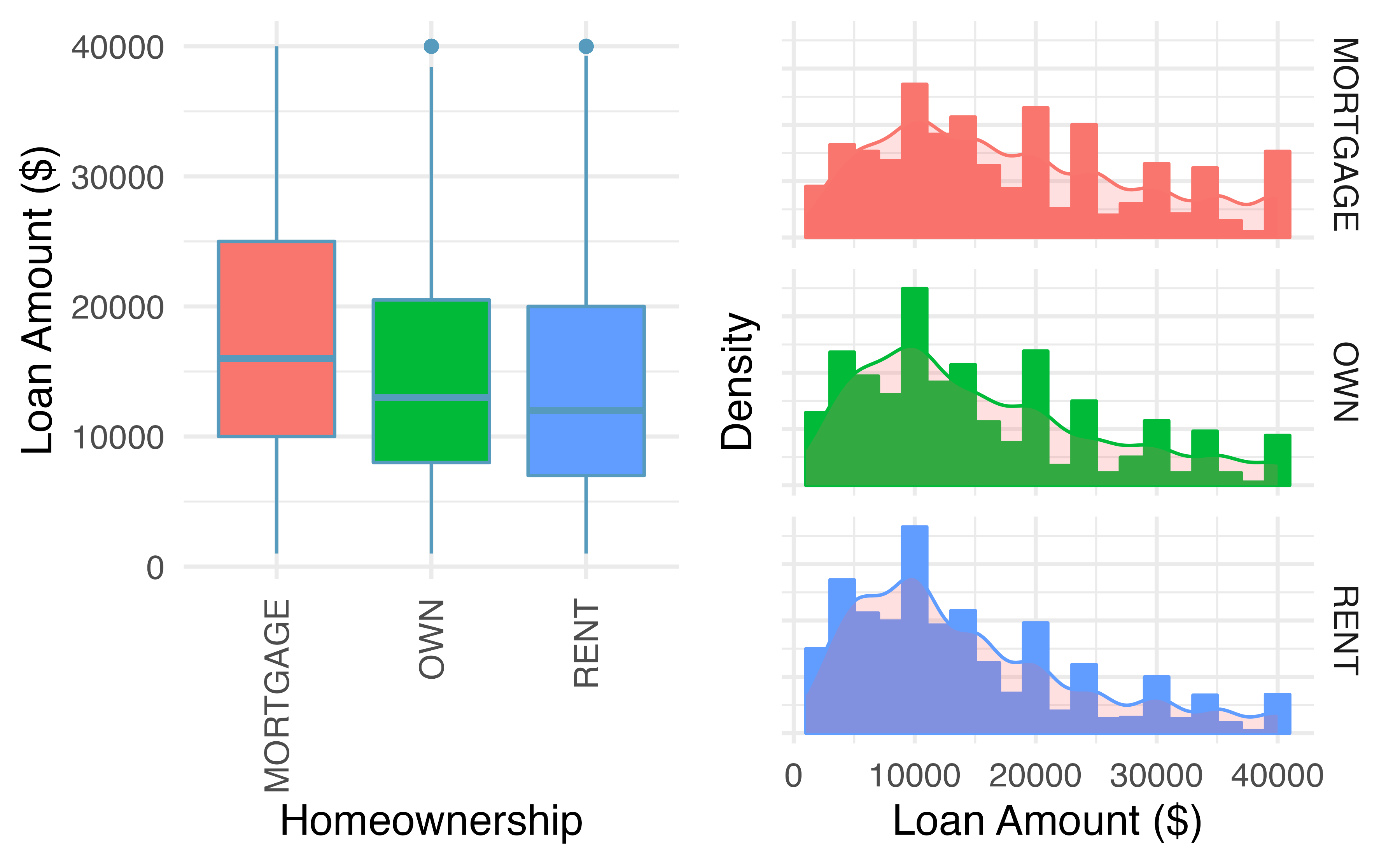

We can compare quantitative distributions by using side-by-side box plots, or stacked histograms or dot plots. Recall that the loan50 data set represents a sample from a larger loan data set called loans.

This larger data set contains information on 10,000 loans made through Lending Club. Figure 5.10 examines the relationship between homeownership, which for the loans data can take a value of rent, mortgage (owns but has a mortgage), or own, and loan_amount. Note that homeownership is a categorical variable and loan_amount is a quantitative variable.

Figure 5.10: Side-by-side box plots of loan amounts by homeownership category and corresponding histograms.

We see immediately that some features are easier to discern in box plots, while others in histograms. Shape is shown more clearly in histograms, while center (as measured by the median) is easy to compare across groups in the side-by-side box plots.

Using Figure 5.10 write a few sentences comparing the distributions of loan amount across the different homeownership categories.

The median loan amount is higher for those with a mortgage (around $16,000) than for those who own or rent (around $12,000-$13,000). However, variability in loan amounts is similar across homeownership categories, with an IQR of around $15,000 and loans ranging from a few hundred dollars to $40,000. We see from the histograms that the distribution of loan amounts is skewed right for all three homeownership categories, which means the mean loan amount will be higher than the median loan amount. There are no apparent outliers in the mortgage category, but both the rent and own categories have outliers at $40,000.

Besides center, variability, shape, and outliers, another interesting feature in these distributions is the result of rounding. Loan amounts in the data set are often rounded to the nearest 100, so we see spikes on these values in the histogram—something that is not evident in the box plots.

5.7 Robust statistics

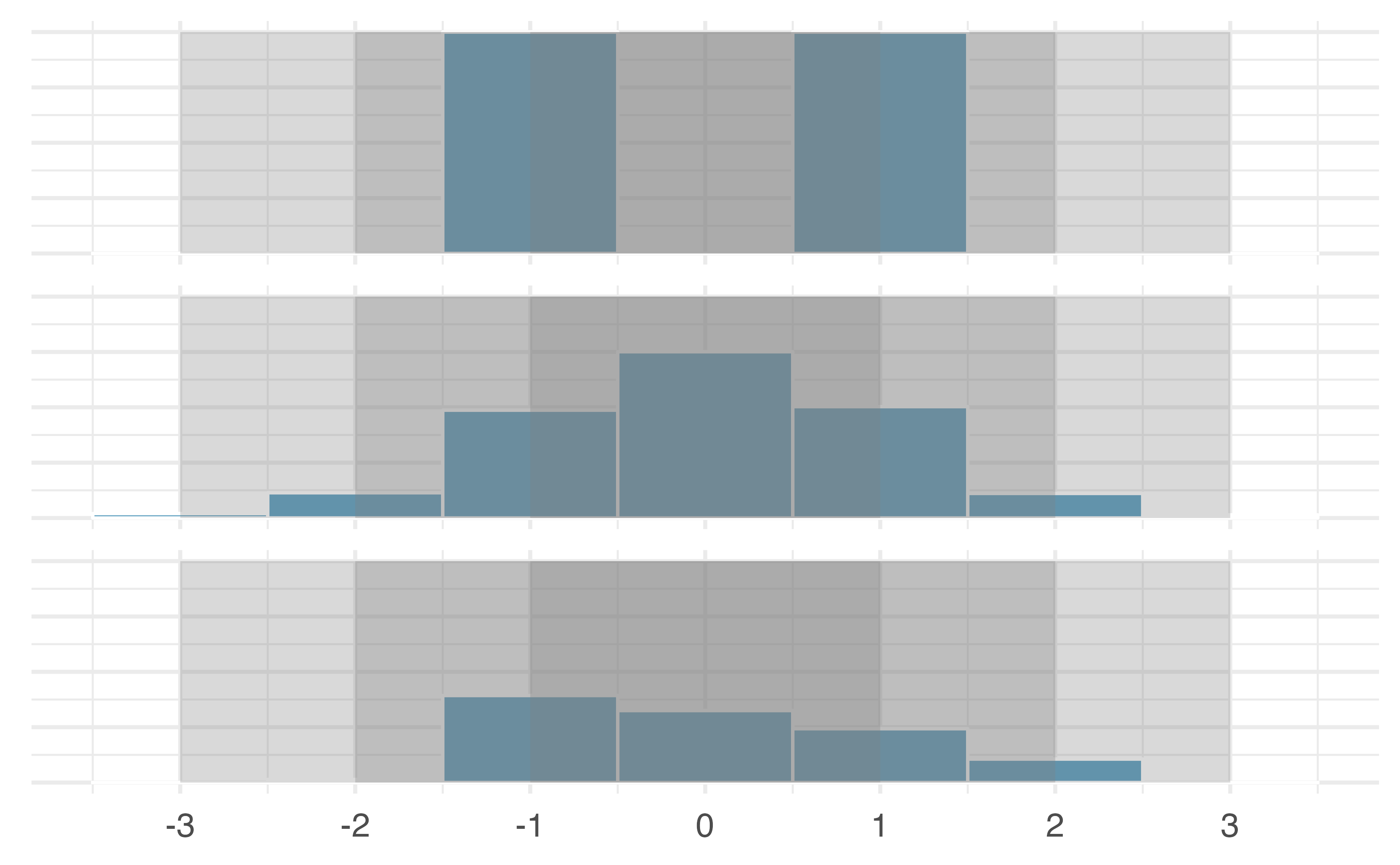

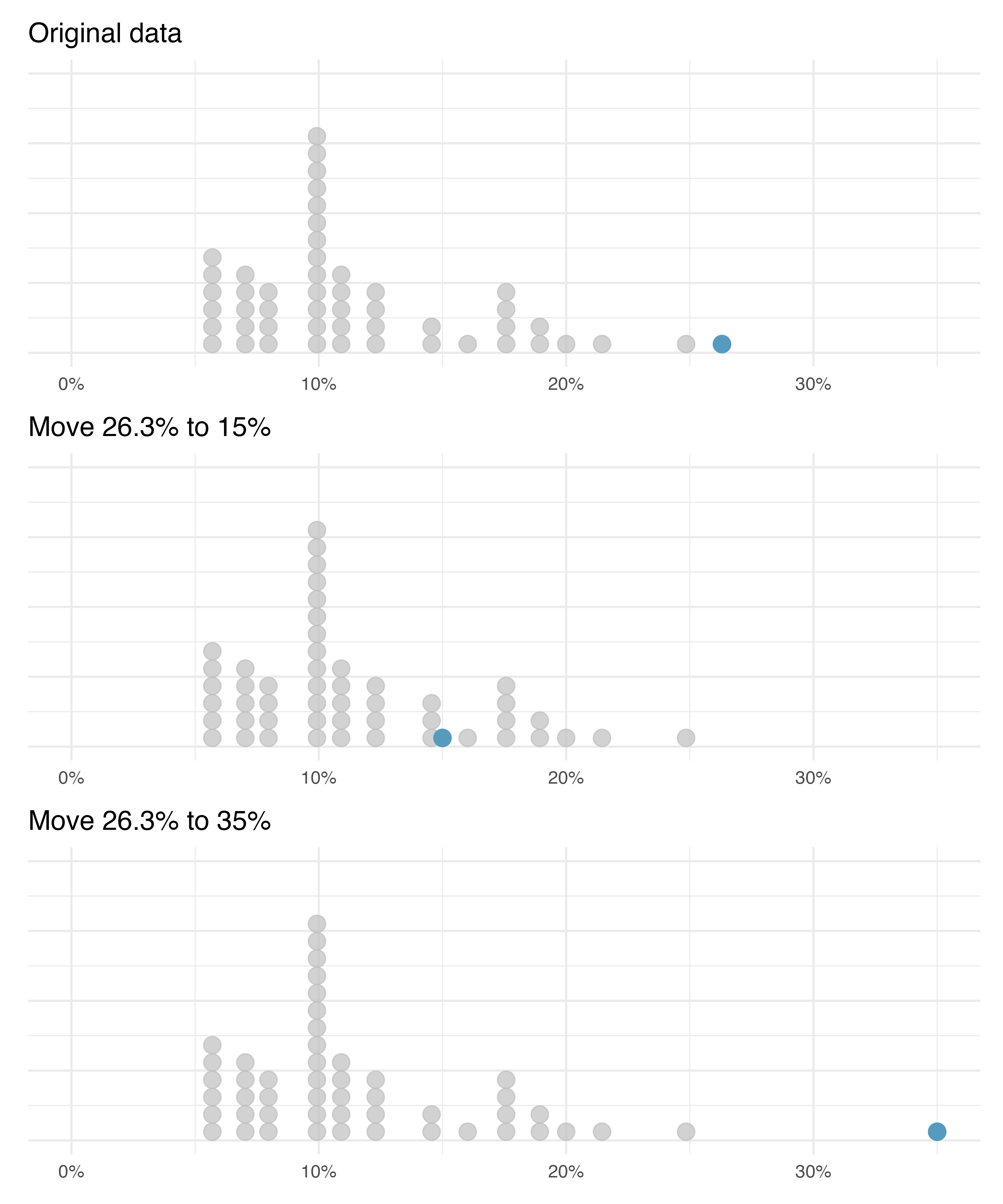

How are the sample statistics of the interest_rate data set affected by the observation, 26.3%?

What would have happened if this loan had instead been only 15%?

What would happen to these summary statistics if the observation at 26.3% had been even larger, say 35%?

These scenarios are plotted alongside the original data in Figure 5.11, and sample statistics are computed under each scenario in Table 5.3.

Figure 5.11: Dot plots of the original interest rate data and two modified data sets.

| Scenario | Median | IQR | Mean | SD |
|---|---|---|---|---|
| Original data | 9.93 | 5.75 | 11.6 | 5.05 |
| Move 26.3% to 15% | 9.93 | 5.75 | 11.3 | 4.61 |
| Move 26.3% to 35% | 9.93 | 5.75 | 11.7 | 5.68 |

- Which is more affected by extreme observations, the mean or median?

- Is the standard deviation or IQR more affected by extreme observations?

The median and IQR are called robust statistics because extreme observations have little effect on their values: moving the most extreme value generally has little influence on these statistics. On the other hand, the mean and standard deviation are more heavily influenced by changes in extreme observations, which can be important in some situations. Additionally, the mean tends to get pulled in the direction of a distribution’s skewness, while the skewness has little effect on the median.

The median and IQR did not change under the three scenarios in Table 5.3. Why might this be the case?

The median and IQR are only sensitive to numbers near \(Q_1\), the median, and \(Q_3\). Since values in these regions are stable in the three data sets, the median and IQR estimates are also stable.
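The three scenarios in Table 5.3 can be recomputed with a short Python sketch. It assumes the rates are entered from Table 5.2 and that quartiles follow the `"inclusive"` convention of `statistics.quantiles`. The helper names `summarize` and `replace_max` are hypothetical, introduced only for this illustration.

```python
import statistics

# The 50 interest rates (in percent), copied from Table 5.2.
rates = [
    5.31, 5.31, 5.32, 6.08, 6.08, 6.08, 6.71, 6.71, 7.34, 7.35,
    7.35, 7.96, 7.96, 7.96, 7.97, 9.43, 9.43, 9.44, 9.44, 9.44,
    9.92, 9.92, 9.92, 9.92, 9.93, 9.93, 10.42, 10.42, 10.90, 10.90,
    10.91, 10.91, 10.91, 11.98, 12.62, 12.62, 12.62, 14.08, 15.04, 16.02,
    17.09, 17.09, 17.09, 18.06, 18.45, 19.42, 20.00, 21.45, 24.85, 26.30,
]


def summarize(values):
    """Return the median, IQR, mean, and standard deviation of values."""
    q1, med, q3 = statistics.quantiles(values, n=4, method="inclusive")
    return {
        "median": med,
        "iqr": q3 - q1,
        "mean": statistics.mean(values),
        "sd": statistics.stdev(values),
    }


def replace_max(values, new_value):
    """Copy of values with the largest observation replaced by new_value."""
    out = sorted(values)
    out[-1] = new_value
    return out


scenarios = {
    "Original data": rates,
    "Move 26.3% to 15%": replace_max(rates, 15.0),
    "Move 26.3% to 35%": replace_max(rates, 35.0),
}
summaries = {name: summarize(v) for name, v in scenarios.items()}

for name, s in summaries.items():
    print(f"{name:20s} median={s['median']:.2f} IQR={s['iqr']:.2f} "
          f"mean={s['mean']:.1f} SD={s['sd']:.2f}")
```

Only the mean and standard deviation move between scenarios, because the replaced value never comes near the positions that determine \(Q_1\), the median, or \(Q_3\).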

The distribution of loan amounts in the loan50 data set is right skewed, with a few large loans lingering out into the right tail.

If you wanted to understand the typical loan size, should you be more interested in the mean or median?

5.8 Transforming data (special topic)

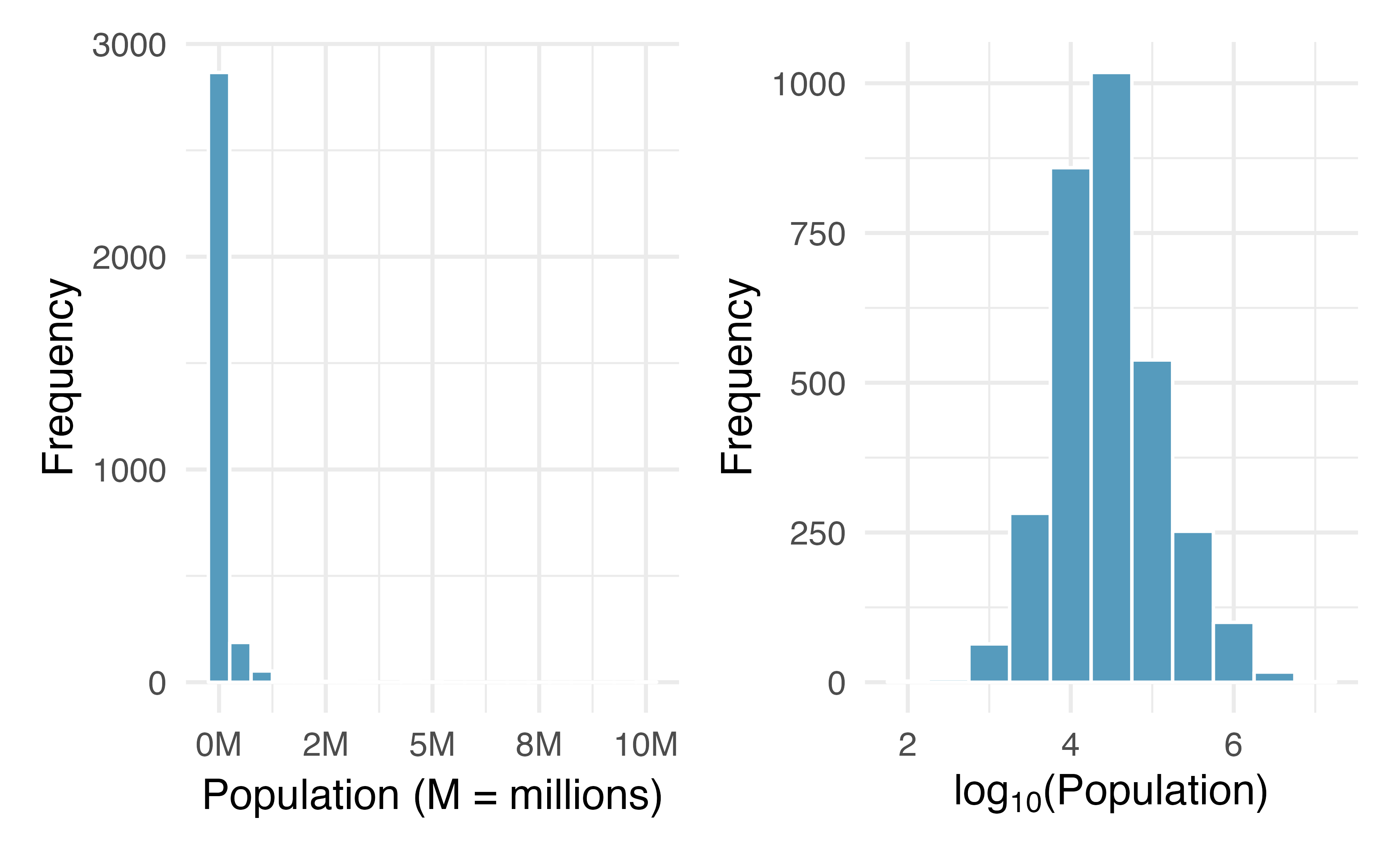

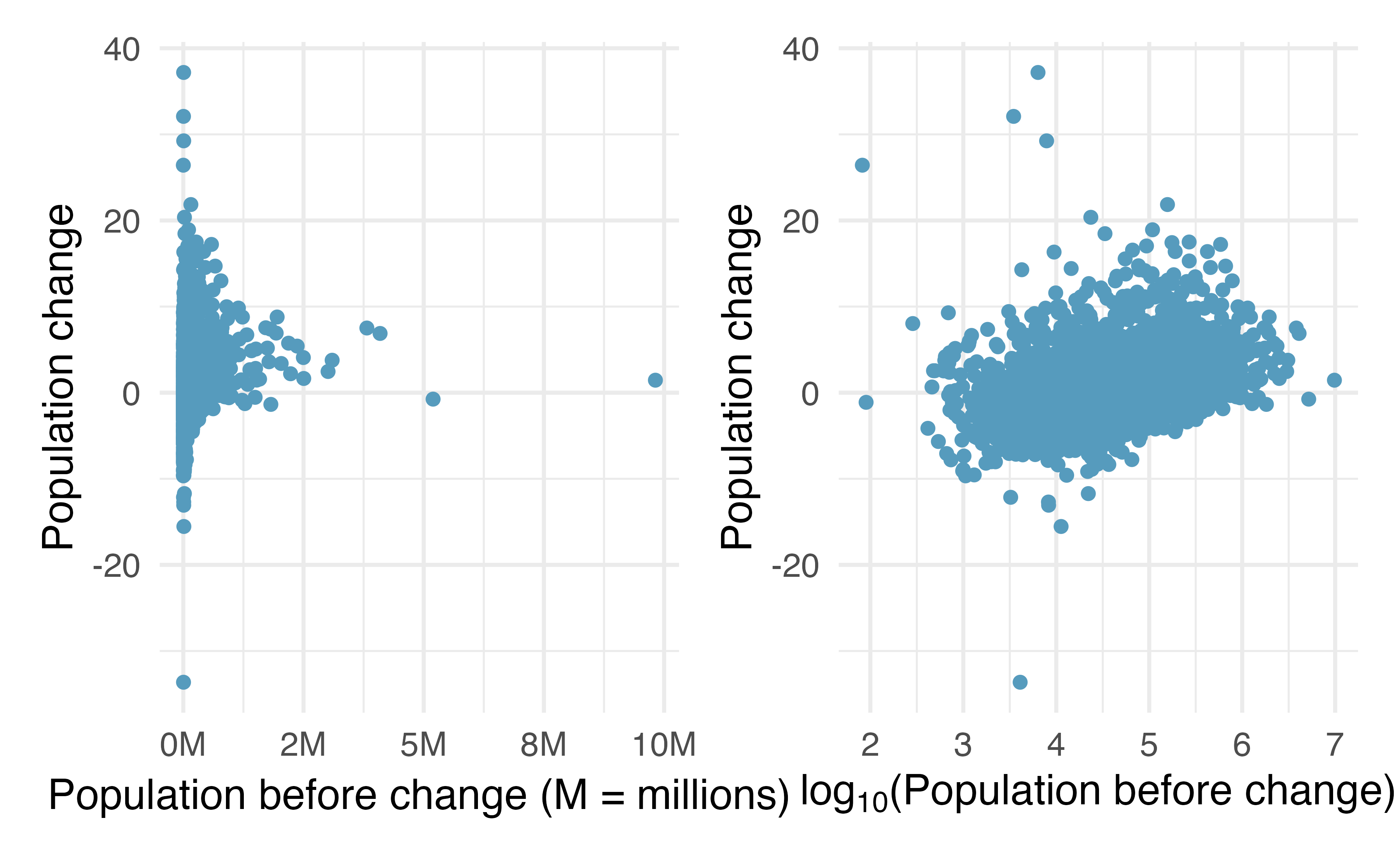

When data are very strongly skewed, we sometimes transform them so they are easier to model. A transformation is a rescaling of the data using a function.

Figure 5.12: Plot A: A histogram of the populations of all US counties. Plot B: A histogram of log\(_{10}\)-transformed county populations. For this plot, the x-value corresponds to the power of 10, e.g. 4 on the x-axis corresponds to \(10^4 =\) 10,000. Data are from 2017.

Consider the histogram of county populations shown in Plot A of Figure 5.12, which shows extreme skew. What is not so useful about this plot?

Nearly all of the data fall into the left-most bin, and the extreme skew obscures many of the potentially interesting details in the data.

There are some standard transformations that may be useful for strongly right skewed data where much of the data is positive but clustered near zero. For instance, a plot of the logarithm (base 10) of county populations results in the new histogram in Plot B of Figure 5.12. The transformed data are symmetric, and any potential outliers appear much less extreme than in the original data set. By reining in the outliers and extreme skew, transformations like this often make it easier to build statistical models for the data.

Transformations can also be applied to one or both variables in a scatterplot. A scatterplot of the population change from 2010 to 2017 against the population in 2010 is shown in Plot A of Figure 5.13. In this first scatterplot, it’s hard to decipher any interesting patterns because the population variable is so strongly skewed. However, if we apply a log\(_{10}\) transformation to the population variable, as shown in Plot B of Figure 5.13, a positive association between the variables is revealed. In fact, we may be interested in fitting a trend line to the data when we explore methods around fitting regression lines in Chapter 6.

Figure 5.13: Plot A: Scatterplot of population change against the population before the change. Plot B: A scatterplot of the same data but where the population size has been log-transformed.

Transformations other than the logarithm can be useful, too. For instance, the square root (\(\sqrt{\text{original observation}}\)) and inverse (\(\frac{1}{\text{original observation}}\)) are commonly used by data scientists. Common goals in transforming data are to see the data structure differently, reduce skew, assist in modeling, or straighten a nonlinear relationship in a scatterplot.
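A small numerical sketch may help show what these transformations do. The populations below are hypothetical values chosen to be strongly right skewed; they are not taken from the county data set. The Python code applies the log\(_{10}\), square root, and inverse transformations and compares the mean and median before and after the log transformation, since a large gap between the two is one symptom of skew.

```python
import math
import statistics

# Hypothetical, strongly right-skewed "county populations" (made up).
populations = [1_200, 4_500, 9_800, 25_000, 61_000, 150_000, 900_000, 10_000_000]

log_pops = [math.log10(p) for p in populations]   # 4 corresponds to 10^4 = 10,000
sqrt_pops = [math.sqrt(p) for p in populations]
inverse_pops = [1 / p for p in populations]

raw_mean, raw_median = statistics.mean(populations), statistics.median(populations)
log_mean, log_median = statistics.mean(log_pops), statistics.median(log_pops)

print(f"raw:   mean = {raw_mean:,.0f}, median = {raw_median:,.0f}")
print(f"log10: mean = {log_mean:.2f}, median = {log_median:.2f}")
```

On the raw scale the single largest value drags the mean far above the median, while on the log\(_{10}\) scale the two are close together.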

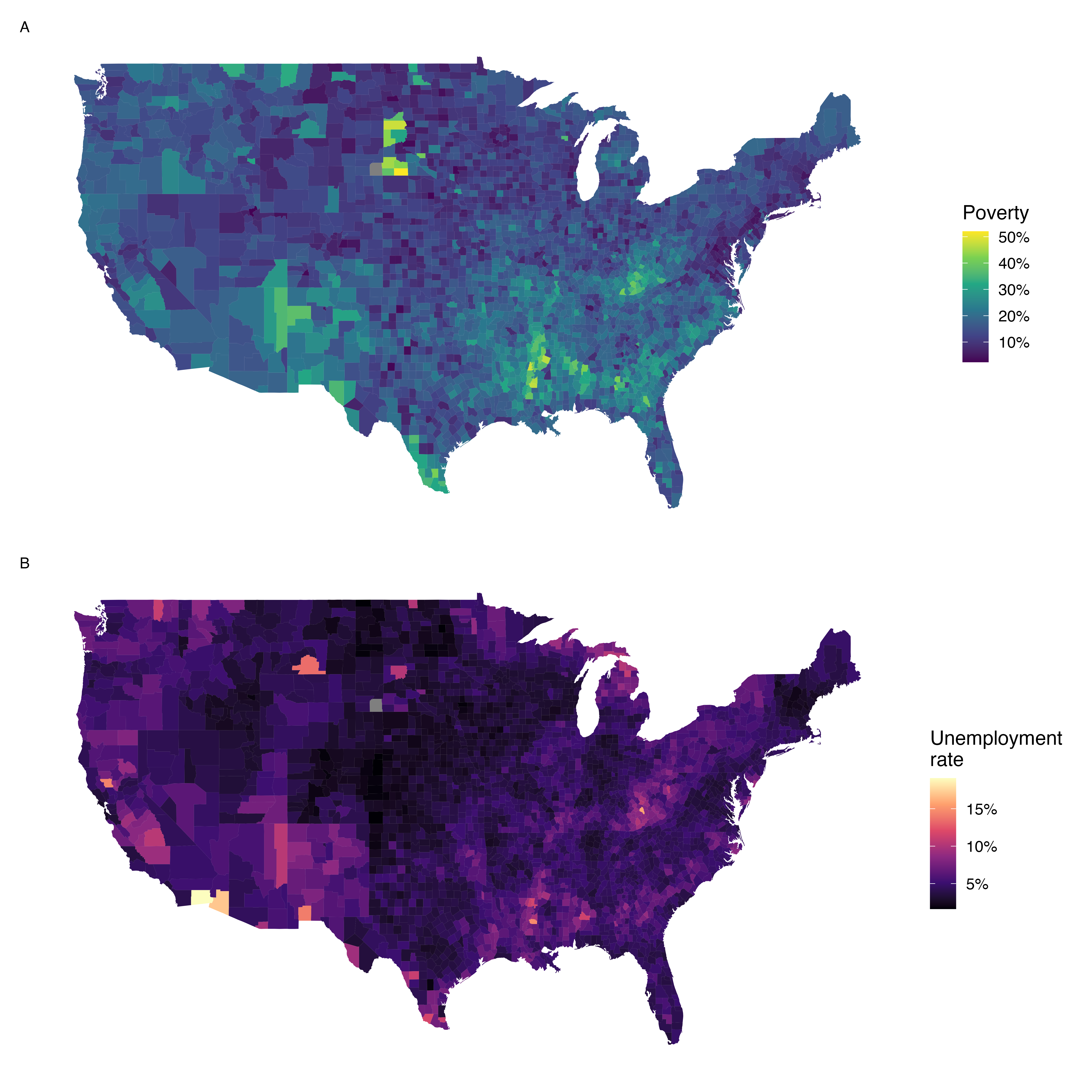

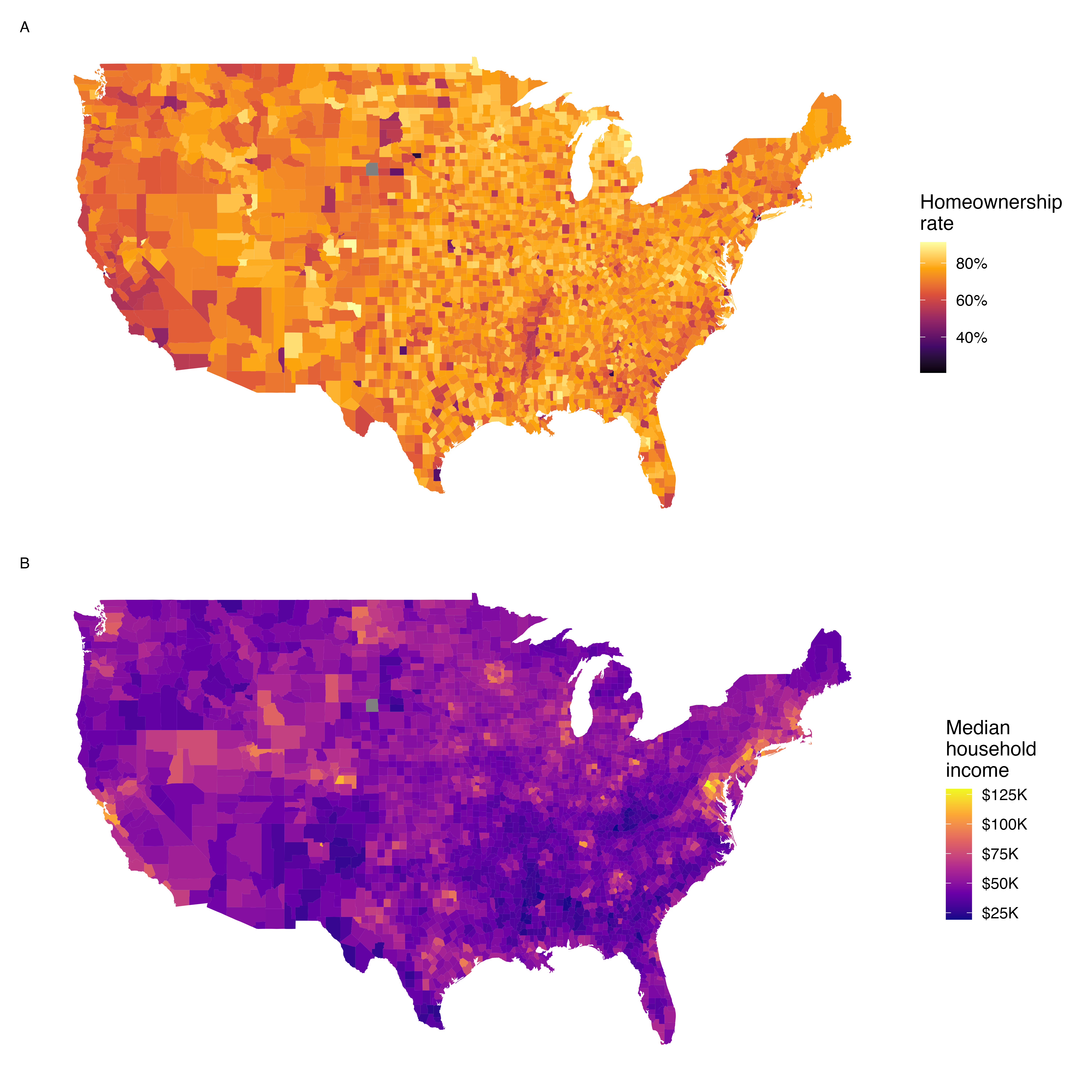

5.9 Mapping data (special topic)

The county data set offers many numerical variables that we could plot using dot plots, scatterplots, or box plots, but these miss the true nature of the data.

Rather, when we encounter geographic data, we should create an intensity map, where colors are used to show higher and lower values of a variable.

Figures 5.14 and 5.15 show intensity maps for poverty rate in percent (poverty), unemployment rate (unemployment_rate), homeownership rate in percent (homeownership), and median household income (median_hh_income).

The color key indicates which colors correspond to which values.

The intensity maps are not generally very helpful for getting precise values in any given county, but they are very helpful for seeing geographic trends and generating interesting research questions or hypotheses.

What interesting features are evident in the poverty and unemployment rate intensity maps?

Poverty rates are evidently higher in a few locations. Notably, the deep south shows higher poverty rates, as does much of Arizona and New Mexico. High poverty rates are evident in the Mississippi flood plains a little north of New Orleans and also in a large section of Kentucky. The unemployment rate follows similar trends, and we can see correspondence between the two variables. In fact, it makes sense for higher rates of unemployment to be closely related to poverty rates. One observation that stands out when comparing the two maps: the poverty rate is much higher than the unemployment rate, meaning while many people may be working, they are not making enough to break out of poverty.

What interesting features are evident in the median household income intensity map in Figure 5.15?

Figure 5.14: Plot A: Intensity map of poverty rate (percent). Plot B: Intensity map of the unemployment rate (percent).

Figure 5.15: Plot A: Intensity map of homeownership rate (percent). Plot B: Intensity map of median household income ($1000s).

5.10 Chapter review

Summary

Fluently working with quantitative variables is an important skill for data analysts. In this chapter we have introduced different visualizations and numerical summaries applied to quantitative variables. The graphical visualizations are even more descriptive when two variables are presented simultaneously. We presented scatterplots, dot plots, histograms, and box plots. Quantitative variables can be summarized using the mean, median, quartiles, standard deviation, and variance.

Terms

We introduced the following terms in the chapter. If you’re not sure what some of these terms mean, we recommend you go back in the text and review their definitions. We are purposefully presenting them in alphabetical order, instead of in order of appearance, so they will be a little more challenging to locate. However, you should be able to easily spot them as bolded text.

| | | |
|---|---|---|
| average | intensity map | standard deviation |
| bimodal | interquartile range | strength |
| box plot | IQR | symmetric |
| data density | left skewed | tail |
| density plot | mean | third quartile |
| deviation | median | transformation |
| direction | multimodal | unimodal |
| distribution | outlier | variability |
| dot plot | outliers | variance |
| fences | percentile | weighted mean |
| first quartile | right skewed | whiskers |
| form | robust statistics | |
| histogram | scatterplot | |

Key ideas

Two variables are associated when the behavior of one variable depends on the value of the other variable. Two quantitative variables are associated when a trend is apparent on a scatterplot. Recall from Chapter 1, association does not imply causation!

When describing the distribution of a single quantitative variable on a histogram, dot plot, or box plot, we look for (1) center, (2) variability, (3) shape, and (4) outliers.

When describing the relationship shown between two quantitative variables in a scatterplot, we look for (1) form, (2) direction, (3) strength, and (4) outliers.